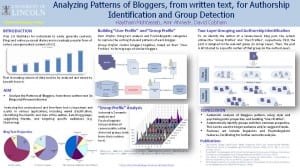

Analyzing Patterns of Bloggers for Authorship Identification and Group Detection.

Haytham Mohtasseb, Amr Ahmed*, David Cobham

- Poster – Click to download the PDF version.

INTRODUCTION

Web 2.0 facilitates for individuals to easily generate contents. Blogs and online personal diaries are increasingly popular form of online user-generated contents (UGC).

That increasing volume of data need to be analysed and mined to benefit from it.

AIM

* Analyse the Patterns of Bloggers, from there written text (in Blogs and Personal diaries)

Analysing this unstructured and free-form text is important and useful in various applications including mood classification, identifying the identify and style of the author, detecting groups, suggesting friends, and targeting specific audiences (e.g. Marketing).

Building “User Profile” and “Group Profile”

User Profile: Using text analysis and Psycholinguistic categories to capture the writing style and patterns of each blogger.

Group Profile: Cluster bloggers together, based on their “User Profiles” to form groups of similar bloggers.

“Group Profile” Analysis

Automatic Semantic analysis and Psycholinguistic interpretation of commonality within detected groups (only from their written text).

Two-Layer Grouping and Authorship Identification

To an identify the author of a (anonymous) blog post, the system utilises the “Group Profiles” and “User Profiles”, respectively. First, the post is assigned to the relevant group (in Group Layer). Then, the post is attributed to a specific Author of that group (in the Author Layer).

CONCLUSION

•Automatic analysis of bloggers pattern, using style and psycholinguistic properties, and building “User Profiles”.

•Automatically identify groups and their common properties. This can be used to target audience and/or suggest friends.

•Features set include linguistics and Psycholinguistic features, facilitating for further semantic analysis.

Publications

- Two-layered Blogger identification model integrating profile and instance-based methods

Mohtasseb Billah, Haytham and Ahmed, Amr (2012) Two-layered Blogger identification model integrating profile and instance-based methods. Knowledge and Information Systems, 31 (1). pp. 1-21. ISSN 0219-1377

- PSYCHONET 2: contextualized and enriched psycholinguistic commonsense ontology

Mohtasseb Billah, Haytham and Ahmed, Amr and Altadmri, Amjad and Cobham, David (2011) PSYCHONET 2: contextualized and enriched psycholinguistic commonsense ontology. In: KEOD 2011 – International Conference on Knowledge Engineering and Ontology Development, 25 – 29 October 2011, Paris, France.

- The affects of demographics differentiations on authorship identification

Mohtasseb Billah, Haytham and Ahmed, Amr (2010) The affects of demographics differentiations on authorship identification. Electronic Engineering and Computing Technology; Lecture Notes in Electrical Engineering 2010, 60 . pp. 409-417. ISSN 1876-1100.

- PsychoNet: a psycholinguistc commonsense ontology

Mohtasseb , Haytham and Ahmed, Amr (2010) PsychoNet: a psycholinguistc commonsense ontology. In: KEOD 2010 International Conference on Knowledge Engineering and Ontology Development part of IC3K, 25 – 28 October, 2010, Valencia, Spain.

- More blogging features for author identification

Mohtasseb, Haytham and Ahmed, Amr (2009) More blogging features for author identification. In: The 2009 International Conference on Knowledge Discovery (ICKD’09), 2009, Manila.

- Mining online diaries for blogger identification

Mohtasseb , Haytham and Ahmed, Amr (2009) Mining online diaries for blogger identification. In: The 2009 International Conference of Data Mining and Knowledge Engineering – The World Congress on Engineering, 1 – 3 July, 2009, London, UK.